What Is nvmedisk.sys?

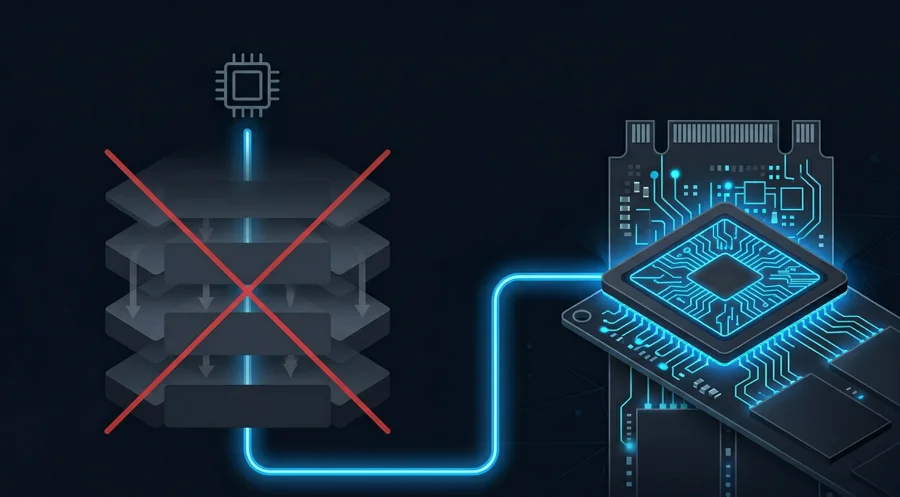

nvmedisk.sys is not just the original disk.sys with tweaks. Microsoft publicly states that the SCSI translation layer is removed in the Native NVMe stack, and nvmedisk.sys appears to be the distinct NVMe-specific driver that replaces the traditional disk/class stack for NVMe devices.

From driver analysis, it appears to answer many disk/storage queries directly from NVMe-native device state, and to use a different data-path model for read/write I/O.

Architecture & Stack Bypass

From driver analysis, instead of relying on classpnp.sys, the new driver appears to implement NVMe-specific handling for control paths, including what looks like its own dispatch table and special-cased handling for certain disk/storage IOCTLs.

Dual Device Object Design

The driver appears to split I/O handling by storing both the PDO (Physical Device Object) and the attached lower device object in its device extension:

- Read/write requests appear to be forwarded to the PDO-side path — what looks like a fast NVMe-native data path.

- PnP, flush, shutdown, and power requests appear to go through the lower device object.

- Before forwarding a power IRP, the driver appears to snapshot the current power state.
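The routing split described in the list above can be modeled in a few lines. This is only a conceptual sketch of the observed behavior, not the driver's code: the IRP major function values match the WDK headers, but `DeviceExtension`, `route_irp`, the routing sets, and the `"handled-locally"` fallback are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class IrpMajor(IntEnum):
    """IRP major function codes, with values as defined in the WDK (wdm.h)."""
    READ = 0x03
    WRITE = 0x04
    FLUSH_BUFFERS = 0x09
    DEVICE_CONTROL = 0x0E
    SHUTDOWN = 0x10
    POWER = 0x16
    PNP = 0x1B


# Hypothetical routing sets mirroring the observations from driver analysis.
PDO_PATH = {IrpMajor.READ, IrpMajor.WRITE}
LOWER_PATH = {IrpMajor.PNP, IrpMajor.FLUSH_BUFFERS, IrpMajor.SHUTDOWN, IrpMajor.POWER}


@dataclass
class DeviceExtension:
    """Hypothetical stand-in for the driver's device extension."""
    pdo: str = "PDO"
    lower_device: str = "LowerDevice"
    power_state: str = "D0"
    power_snapshots: list = field(default_factory=list)


def route_irp(ext: DeviceExtension, major: IrpMajor) -> str:
    """Return the name of the device object that would receive the request."""
    if major in PDO_PATH:
        # Read/write: the apparent fast NVMe-native data path.
        return ext.pdo
    if major in LOWER_PATH:
        if major == IrpMajor.POWER:
            # Snapshot the current power state before forwarding the power IRP.
            ext.power_snapshots.append(ext.power_state)
        return ext.lower_device
    # Assumption: everything else (for example DEVICE_CONTROL) is handled
    # by the driver's own dispatch logic in this model.
    return "handled-locally"
```

Keeping both device objects in one extension is what would let a single dispatch routine choose a target per request type without an extra lookup.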

I/O Path & Write Cache

Microsoft publicly describes a redesigned I/O workflow with better performance, but does not document the specific write-cache internals. The following observations are from driver analysis.

Write cache handling appears to be NVMe-specific rather than inherited from the generic class driver: nvmedisk.sys appears to manage the NVMe volatile write cache directly, with what looks like state-transition tracking and telemetry when cache characteristics change.

Flush and shutdown appear to be forwarded through the attached lower device object, as do power state transitions. The driver appears to snapshot the current power state before forwarding, which may suggest cache-safety decisions tied to the power context, though this is not confirmed by public documentation.
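To make "state-transition tracking and telemetry" concrete, here is a toy model of what such tracking could look like. Everything in it is an assumption for illustration: `WriteCacheTracker`, its fields, and the event layout are hypothetical, and recording the power state alongside each change reflects the unconfirmed inference above rather than documented behavior.

```python
from dataclasses import dataclass, field


@dataclass
class WriteCacheTracker:
    """Hypothetical model of volatile write cache (VWC) state tracking."""
    enabled: bool
    events: list = field(default_factory=list)

    def update(self, enabled: bool, power_state: str) -> bool:
        """Record a telemetry event only when the cache state actually changes.

        Returns True if a transition was recorded, False otherwise.
        """
        if enabled == self.enabled:
            return False
        self.events.append(
            {"from": self.enabled, "to": enabled, "power_state": power_state}
        )
        self.enabled = enabled
        return True
```

Emitting an event only on an actual transition keeps the telemetry volume proportional to configuration changes rather than to I/O volume.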

Pass-Through & IOCTLs

From initial driver analysis, nvmedisk.sys does not clearly expose the standard IOCTL_STORAGE_PROTOCOL_COMMAND pass-through path in the same way StorNVMe.sys does. It appears to implement its own control logic around several IOCTLs. However, Microsoft's general NVMe documentation continues to describe IOCTL_STORAGE_PROTOCOL_COMMAND as the standard pass-through interface, so this observation needs stronger confirmation before concluding the path is absent or replaced.
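When checking which control codes a tool sends or a driver handles, it helps to know how the numeric values are built. The sketch below reproduces the `CTL_CODE` macro arithmetic from the Windows SDK for two storage IOCTLs; the constants follow winioctl.h, while the helper names `ctl_code` and `decode_ctl_code` are introduced here for illustration. It says nothing about whether nvmedisk.sys accepts either code.

```python
# Constants as defined in the Windows SDK (winioctl.h).
FILE_DEVICE_MASS_STORAGE = 0x2D
IOCTL_STORAGE_BASE = FILE_DEVICE_MASS_STORAGE
METHOD_BUFFERED = 0
FILE_ANY_ACCESS = 0
FILE_READ_ACCESS = 0x1
FILE_WRITE_ACCESS = 0x2


def ctl_code(device_type: int, function: int, method: int, access: int) -> int:
    """Python equivalent of the CTL_CODE macro's bit layout."""
    return (device_type << 16) | (access << 14) | (function << 2) | method


def decode_ctl_code(code: int) -> dict:
    """Split a control code back into its four fields."""
    return {
        "device_type": (code >> 16) & 0xFFFF,
        "access": (code >> 14) & 0x3,
        "function": (code >> 2) & 0xFFF,
        "method": code & 0x3,
    }


IOCTL_STORAGE_QUERY_PROPERTY = ctl_code(
    IOCTL_STORAGE_BASE, 0x0500, METHOD_BUFFERED, FILE_ANY_ACCESS
)
IOCTL_STORAGE_PROTOCOL_COMMAND = ctl_code(
    IOCTL_STORAGE_BASE, 0x04F0, METHOD_BUFFERED, FILE_READ_ACCESS | FILE_WRITE_ACCESS
)

if __name__ == "__main__":
    print(hex(IOCTL_STORAGE_QUERY_PROPERTY))
    print(hex(IOCTL_STORAGE_PROTOCOL_COMMAND))
```

Decoding a captured control code this way is a quick method for telling a property query from a pass-through command when comparing traces taken under the two drivers.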

Performance Implications

Likely Upsides

- Lower CPU overhead per I/O and lower latency, especially for small random I/O, flush-heavy workloads, and workloads with high IOPS or high queue depth (QD).

- Better QD scaling is possible with the NVMe-native path.

- Faster metadata and control operations if queries are served from cached NVMe state rather than translated requests.

- Tail latency improvement from eliminating SCSI translation overhead.

- Additional efficiency gains from more efficient flush/cache handling, better namespace-aware behavior, and lower multi-core workload contention.
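The relationship between latency, queue depth, and per-I/O CPU cost in the list above can be put into rough numbers using Little's Law (throughput = outstanding requests / mean latency). This is a back-of-the-envelope sketch: the function names are made up for this example, and any figures passed in are illustrative inputs, not measurements of nvmedisk.sys.

```python
def iops_from_littles_law(queue_depth: int, mean_latency_us: float) -> float:
    """Sustained IOPS for a given queue depth and mean latency (microseconds)."""
    return queue_depth * 1_000_000 / mean_latency_us


def cores_needed(iops: float, cpu_us_per_io: float) -> float:
    """CPU cores consumed by the I/O path at a given per-I/O CPU cost."""
    return iops * cpu_us_per_io / 1_000_000


if __name__ == "__main__":
    # Illustrative inputs only: QD 32, 100 us mean latency, 5 us of CPU per I/O.
    iops = iops_from_littles_law(32, 100)
    print(iops, cores_needed(iops, 5))
```

With these illustrative inputs, QD 32 at 100 µs mean latency corresponds to 320,000 IOPS, and at 5 µs of CPU per I/O that rate would occupy about 1.6 cores. Under this model, any per-I/O cost removed from the stack lowers the core count linearly, which is why per-I/O overhead matters most for high-IOPS workloads.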

Potential Tradeoffs

- Compatibility risk for storage utilities/tools and other software that expects the legacy pass-through interface.

- Consistency uncertainty if power/cache policies change under the new driver model.

nvmedisk.sys is a meaningful architectural shift, not a cosmetic rebrand. Microsoft has confirmed a new NVMe-native disk driver that removes the SCSI translation layer, and the opt-in rollout suggests real behavioral differences.

Microsoft-documented facts: Native NVMe is a real opt-in feature in Windows Server 2025 that removes the SCSI translation layer, depends on the Microsoft NVMe driver, and targets meaningful I/O performance gains for NVMe workloads.

Inferred from driver analysis (not confirmed by public docs): the exact stack relationships shown above, the dispatch-table and IOCTL specifics, the dual device-object I/O routing model, direct write-cache telemetry behavior, power-state snapshotting, and the apparent changes to the IOCTL_STORAGE_PROTOCOL_COMMAND pass-through path.

For workloads that are latency-sensitive or IOPS-bound, this is the kind of stack cleanup that should deliver real, measurable improvement — and existing benchmarks from third parties support this.