What Is solidnvm.sys?

solidnvm.sys is a Storport miniport that replaces StorNVMe.sys for supported devices matching vendor/class-code entries in the INF. The driver appears intended to coexist with the Windows 11 DirectStorage BypassIO stack, though explicit Solidigm documentation confirming BypassIO compliance for solidnvm.sys specifically should be cited before treating this as established.

Appears to own HMB across init, sleep/resume, unload, and surprise removal.

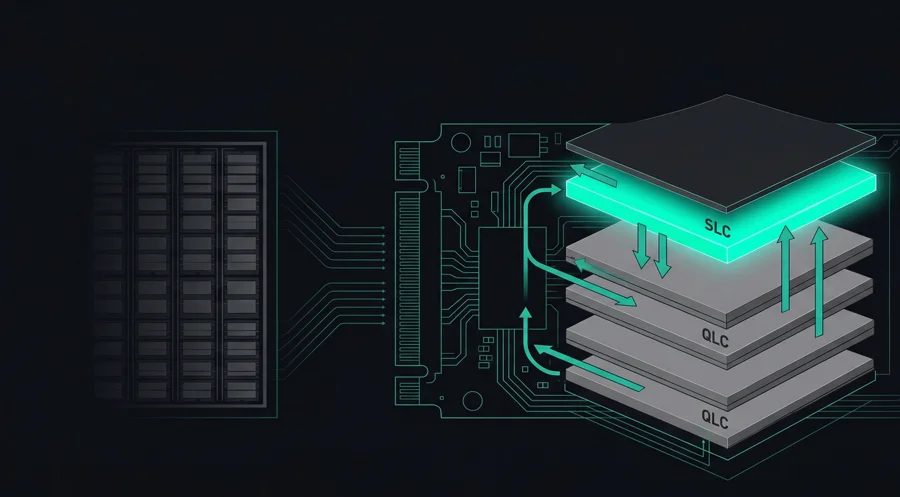

Bidirectional SLC cache with hot QLC data promotion back into the cache.

Stream IDs and dataset management hints to reduce write amplification.

Host Memory Buffer (HMB)

The HMB allocation appears to be DMA-safe, pinned, and cache-coherent from the host side, with IOMMU registration constraining the device's DMA access.

solidnvm.sys appears to handle the HMB in ways StorNVMe.sys would not. From driver analysis, it appears to own the full HMB lifecycle across init, sleep/resume, unload, and surprise removal. HMB size is device-specific.

Based on driver analysis, HMB appears to be disabled before entering NVMe power state PS4 and re-enabled upon PS0 re-entry. If confirmed, this would tie HMB management directly to the APST (Autonomous Power State Transition) implementation.

HMC & Fast Lane

Public Solidigm materials confirm that hot QLC data can be promoted back into the SLC cache ("Fast Lane"), making the cache effectively bidirectional for read and write behavior.

Promotion policy appears to use vendor commands. Possible commands include querying pool utilization, setting promotion thresholds, and pinning ranges of user data (such as OS boot files).

HMB control · SLC cache policy · Read/write pattern hints · Prefetching behavior · Related query paths

solidnvm.sys appears to annotate standard NVMe reads and writes with stream IDs and dataset management hints based on observed access patterns. This should improve placement and prefetch behavior and reduce write amplification.

Streams & Data Placement

The driver appears to tag writes with stream IDs using the NVMe Streams Directive. Stream IDs let the controller co-locate related data physically, which should reduce write amplification and improve QLC endurance.

- NVMe Streams Directive — stream IDs let the controller group related writes physically, reducing garbage collection overhead.

- Dataset Management hints — appear to carry prefetch/overwrite hints, e.g. that a range will be read soon or overwritten.

- Access pattern annotation — the driver appears to observe host I/O patterns and annotate commands accordingly.
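The access-pattern annotation described above can be illustrated with a minimal sketch. It assumes a single heuristic (a write is sequential if it starts where the previous write ended) and two hypothetical stream IDs. The names `StreamTagger`, `STREAM_SEQUENTIAL`, and `STREAM_RANDOM` are invented for illustration and do not come from the driver.

```python
from typing import Optional

STREAM_SEQUENTIAL = 1  # hypothetical stream ID for sequential writes
STREAM_RANDOM = 2      # hypothetical stream ID for everything else


class StreamTagger:
    """Toy classifier: picks a stream ID from the observed write pattern."""

    def __init__(self) -> None:
        # LBA at which the previous write ended; None until the first write.
        self._next_lba: Optional[int] = None

    def tag(self, lba: int, blocks: int) -> int:
        # A write is treated as sequential if it continues the previous one.
        sequential = self._next_lba is not None and lba == self._next_lba
        self._next_lba = lba + blocks
        return STREAM_SEQUENTIAL if sequential else STREAM_RANDOM


tagger = StreamTagger()
tags = [tagger.tag(0, 8), tagger.tag(8, 8), tagger.tag(100, 8), tagger.tag(108, 4)]
```

Grouping sequential writes under one stream ID is what would let a controller keep them together physically. A real driver would likely track patterns per file or per queue rather than globally, which this sketch does not attempt.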

Power Management

solidnvm.sys includes an NVMe APST (Autonomous Power State Transition) implementation. From analysis, it does not appear especially unusual, though the APST time ranges are present in the driver.

The key tie-in appears to be with HMB: from driver analysis, the driver appears to disable HMB before entering PS4 and re-enable it for PS0 re-entry, which would ensure the host memory buffer isn't accessed while the device is in a deep sleep state.
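The ordering described above can be modeled as a small state machine. This is a sketch of the inferred behavior only, not the driver's code. `HmbPowerModel` and its event names are hypothetical, and treating PS4 as the only state that triggers an HMB disable is an assumption taken from the text.

```python
from dataclasses import dataclass, field
from typing import List

DEEP_SLEEP_STATE = 4  # PS4, per the text; assumed to be the only trigger


@dataclass
class HmbPowerModel:
    """Toy model: HMB is disabled before PS4 and re-enabled on PS0 re-entry."""

    power_state: int = 0
    hmb_enabled: bool = True
    log: List[str] = field(default_factory=list)

    def transition(self, target: int) -> None:
        if target == DEEP_SLEEP_STATE and self.hmb_enabled:
            # Disable first, so the device cannot touch host memory while asleep.
            self.hmb_enabled = False
            self.log.append("hmb_disable")
        self.power_state = target
        self.log.append(f"enter_ps{target}")
        if target == 0 and not self.hmb_enabled:
            # Re-enable only once the device is back in PS0.
            self.hmb_enabled = True
            self.log.append("hmb_enable")


model = HmbPowerModel()
model.transition(4)
model.transition(0)
```

After these two transitions, `model.log` records the disable before the PS4 entry and the enable after the PS0 entry, which is the ordering the analysis suggests.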

Vendor Interface & Pass-Through

Unlike Microsoft's StorNVMe.sys, which gates vendor pass-through commands against the Command Effects Log (CEL), solidnvm.sys does not appear to enforce CEL gating on IOCTL_STORAGE_PROTOCOL_COMMAND. This means vendor commands may pass through to hardware without the standard StorNVMe safety check.
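To make the idea of CEL gating concrete, here is a minimal sketch of such a check. It relies on the NVMe layout of the Commands Supported and Effects log page (Log ID 05h): a 4096-byte page with 256 four-byte admin entries at offset 0 and 256 I/O entries at offset 1024, where bit 0 of each entry (CSUPP) marks the command as supported. Which bits StorNVMe.sys actually inspects is not stated here, so checking only CSUPP is an assumption, and `command_supported` is a hypothetical helper name.

```python
import struct

CEL_SIZE = 4096       # size of the Commands Supported and Effects log page
ADMIN_OFFSET = 0      # 256 admin command entries, 4 bytes each
IO_OFFSET = 1024      # 256 I/O command entries, 4 bytes each
CSUPP = 0x1           # bit 0: command supported


def command_supported(cel: bytes, opcode: int, admin: bool = True) -> bool:
    """Return True if the CEL marks this opcode as supported (CSUPP set)."""
    if len(cel) != CEL_SIZE:
        raise ValueError("CEL page must be 4096 bytes")
    if not 0 <= opcode <= 0xFF:
        raise ValueError("opcode must fit in one byte")
    base = ADMIN_OFFSET if admin else IO_OFFSET
    (entry,) = struct.unpack_from("<I", cel, base + 4 * opcode)
    return bool(entry & CSUPP)


# Build a synthetic log page that advertises only admin opcode 0xC0.
page = bytearray(CEL_SIZE)
struct.pack_into("<I", page, ADMIN_OFFSET + 4 * 0xC0, CSUPP)
allowed = command_supported(bytes(page), 0xC0)
blocked = command_supported(bytes(page), 0xC1)
```

A gating driver would refuse to forward a command for which this check returns `False`. A driver without the gate, as solidnvm.sys appears to be, would forward it regardless.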

Solidigm Management Interface (SMI)

Vendor commands exposed through the SMI include:

- Data transfer — controller read, event log, flash ID, telemetry, latency stats.

- SLC management — allocation, cache policy control.

- Extended admin commands — firmware download/commit, self-test, namespace management, sanitize, security send/receive, format NVM, set features.

Queue Architecture

From driver analysis: standard DMA setup, IOMMU registration, MSI-X distribution, and what appears to be per-CPU I/O queue configuration. If confirmed, per-CPU queue pairs would reduce or eliminate cross-CPU locking on queue activity.
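A per-CPU queue configuration can be sketched as a simple mapping from CPU index to I/O queue ID. This is an illustration under assumptions, not the driver's algorithm: `queue_for_cpu` is a hypothetical name, and the modulo fallback for the case of fewer queues than CPUs is invented for the example. The only NVMe fact used is that queue ID 0 is the admin queue, so I/O queues start at 1.

```python
def queue_for_cpu(cpu: int, io_queue_count: int) -> int:
    """Return a 1-based I/O queue ID for a CPU (queue 0 is the admin queue)."""
    if io_queue_count < 1:
        raise ValueError("need at least one I/O queue")
    if cpu < 0:
        raise ValueError("cpu index must be non-negative")
    # With at least as many queues as CPUs, every CPU gets its own queue pair.
    # With fewer queues, CPUs share queues (an assumed fallback).
    return 1 + (cpu % io_queue_count)


mapping = [queue_for_cpu(cpu, io_queue_count=8) for cpu in range(4)]
```

When each CPU submits only to its own queue pair, no two CPUs contend for the same submission queue, which is where the reduction in cross-CPU locking would come from.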

Arbitration & Priority

- Standard arbitration with extensions — the documented path is standard for NVMe, but there may be a vendor-specific extension for Solidigm drives.

- Priority-class weighting — from driver analysis, appears to support four levels at submission time: low, medium, high, urgent.

- Host-side priority mapping — appears to be handled through solidnvm.sys.

- Vendor scheduling — possible proprietary scheduling on Solidigm drives, e.g. "queue data to improve performance transparently."
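The four priority levels listed above resemble NVMe's weighted round robin arbitration with an urgent priority class, where urgent queues are served strictly first and the high, medium, and low classes share the remainder by weight. The sketch below drains a fixed set of commands under that scheme. The weights, the `arbitrate` function, and the command names are all hypothetical, and nothing here is taken from solidnvm.sys.

```python
from collections import deque
from typing import Dict, List

# Hypothetical weights: commands served per round for each class.
WEIGHTS = {"high": 4, "medium": 2, "low": 1}


def arbitrate(queues: Dict[str, List[str]]) -> List[str]:
    """Return the service order: urgent first, then weighted round robin."""
    pending = {name: deque(cmds) for name, cmds in queues.items()}
    order: List[str] = []
    # The urgent class has strict priority over the weighted classes.
    urgent = pending.get("urgent", deque())
    while urgent:
        order.append(urgent.popleft())
    # Weighted round robin across high, medium, and low.
    while any(pending.get(name) for name in WEIGHTS):
        for name, weight in WEIGHTS.items():
            queue = pending.get(name)
            for _ in range(weight):
                if not queue:
                    break
                order.append(queue.popleft())
    return order


order = arbitrate({
    "urgent": ["u1"],
    "high": ["h1", "h2", "h3", "h4", "h5"],
    "medium": ["m1", "m2", "m3"],
    "low": ["l1", "l2"],
})
```

This models a one-shot drain of commands that are already queued. A real controller arbitrates continuously as new commands arrive, and the weights would be set by the host rather than fixed.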

solidnvm.sys isn't just a branded StorNVMe. It adds coordination between the host and the SSD controller that goes beyond what the inbox driver provides.

Publicly documented by Solidigm: HMC/Fast Lane, Smart Prefetch, Dynamic Queue Assignment, HMB support, APST, SLC eviction/promotion behavior, hot QLC data management, and tighter host-SSD coordination.

Inferred from driver analysis (not yet confirmed by public docs): detailed HMB lifecycle ownership across power states, specific PS4/PS0 HMB disable/enable behavior, NVMe Streams Directive annotation of standard I/O, Dataset Management hinting beyond standard StorNVMe behavior, per-CPU queue pairs, and four-level priority-class weighting.

- Solidigm Synergy 2.0 Driver User Guide

- Solidigm Synergy 2.0 Technical Reference Manual

- Solidigm Synergy 2.0 White Paper

- P41 Plus Product Brief

- Solidigm P41 Plus Product Performance Evaluation Guide

Tracing how SynergyCLI and solidnvm.sys communicate with the drive. Available methods with two P41 Plus drives: ETW/StorPort tracing while running SynergyCLI, capturing IOCTL_STORAGE_PROTOCOL_COMMAND calls, WinDbg, or a combination. Solidigm uses WPR/WPA for workload analysis, which is ETW-based but not the same as directly tracing the driver.